I often think about an afternoon many years ago when I took my daughter to our local coffee shop to treat ourselves to a special dessert. She was around four or five years old, and as she stood in front of the enormous display of pies, cakes, and puddings, she became overwhelmed and said, “What to choose? There is too much of much!” Too much of much... I found such meaning in those unexpected words that the phrase has stayed with me throughout the years.


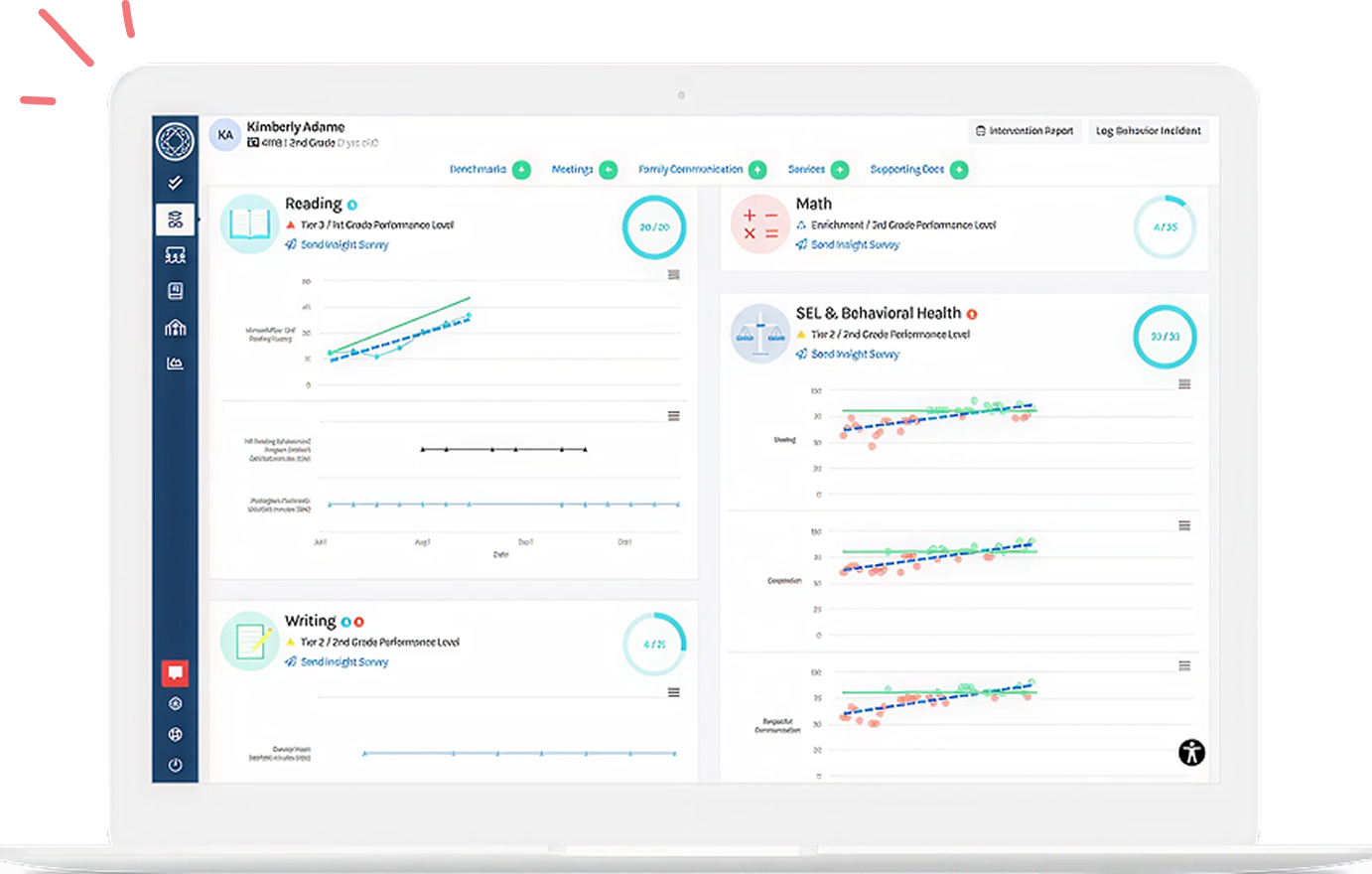

Fast forward to today: I work with schools and districts across the country as an educational consultant focusing on RTI/MTSS (Response to Intervention/Multi-Tiered System of Supports). Through my work, my clients learn to navigate what can at first feel like a quagmire of data as they transition from outdated intervention systems to a more comprehensive MTSS approach. As they embark on this work, teachers can become overwhelmed when trying to use data to guide their lesson planning and when deciding what data to bring to collaboration meetings with their colleagues. It can also be difficult for my clients to sift through the massive number of interventions found on the web in order to match evidence-based plans to their students’ specific areas of demonstrated need. And the search does not end there: once they find interventions and carry them out, what data should they use to determine what is working and what is not?

Every educator experiences the “too much of much” feeling at some point. Whether they teach remotely or in person, my clients frequently raise this topic as we prepare for their professional development, even though they understand that analyzing and using student data is critical to their work. To state the obvious, without some basic guidelines, data use in schools can quickly become overwhelming. I therefore use the five essential questions below to guide our “too much” discussions, to foster a seamless, data-driven culture in their schools, and to reduce feelings of being overwhelmed.

Essential Questions:

Question #1: What is the current culture around data in my building?

Schools and districts often have many different assessment protocols and interventions scattered throughout their buildings. Assessment protocols can include universal screeners, progress monitoring tools, curriculum-based assessments, intervention subscriptions, and more. As these protocols build up over the years, a phenomenon sometimes referred to as the “Christmas Tree School” occurs. The “tree” is the school, and each protocol in the building adds another ornament, garland, or string of lights. These layers accumulate and make it very difficult to see what is taking place within the tree. As a result, many of my clients do not have a clear picture of whether there are any consistent assessment protocols or available intervention tools in their buildings. Before we develop comprehensive and consistent MTSS protocols together, I always begin by asking about the culture around data in my clients’ buildings. Does the building resemble a “Christmas Tree School”? Do teachers feel comfortable discussing data, and do they have student data generated from valid and reliable sources? Is there a point person in the building to support teachers with assessment and intervention? If there is not, my first step is always to suggest that an RTI/MTSS/Student Support Team coordinator be appointed in each building. Until that role exists, teachers can begin simply by asking their colleagues questions such as “What data do you have access to review for your students?”

Question #2: How does the data show me who needs support and if core instruction is working? Which data do I use and why?

Core curriculum must have a high probability of success for 80% or more of my students. If core instruction is not meeting the needs of at least 80% of my students, I must ask myself whether I am implementing it with fidelity. This may also be the time to evaluate whether I am consistently using best practices in my lessons, such as providing students with frequent feedback and using flexible grouping. Another best practice to consider is the use of normed universal benchmark screeners. When benchmarks are given in the fall, winter, and spring, teachers can quickly identify their students’ areas of strength as well as their areas of need. Analyzing this screening data for trends or patterns in each topic area also helps determine whether the core is meeting the needs of 80% of my students. Many universal screeners also have accompanying progress monitoring tools for following up with students who show areas of need (see Question #4 below).
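To make the 80% check concrete, here is a minimal Python sketch. It assumes each student has a single screener score and that the screener publishes a benchmark cut score; the `ScreenerResult` record, the function names, the sample scores, and the cut score of 45 are all hypothetical rather than taken from any particular screener.

```python
from dataclasses import dataclass


# Hypothetical record: one row per student from a fall, winter, or spring screener.
@dataclass
class ScreenerResult:
    student: str
    score: float


def share_at_benchmark(results, cut_score):
    """Fraction of students scoring at or above the (assumed) benchmark cut score."""
    if not results:
        raise ValueError("no screener results to analyze")
    at_benchmark = sum(1 for r in results if r.score >= cut_score)
    return at_benchmark / len(results)


def core_meets_target(results, cut_score, target=0.80):
    """80% rule of thumb: core instruction is meeting most needs
    when at least `target` of students are at or above benchmark."""
    return share_at_benchmark(results, cut_score) >= target


def students_below_benchmark(results, cut_score):
    """Students who may need follow-up with progress monitoring."""
    return [r.student for r in results if r.score < cut_score]


if __name__ == "__main__":
    fall = [ScreenerResult(name, score) for name, score in [
        ("A", 52), ("B", 47), ("C", 61), ("D", 38), ("E", 55),
        ("F", 49), ("G", 58), ("H", 41), ("I", 63), ("J", 50),
    ]]
    print(share_at_benchmark(fall, cut_score=45))        # 0.8
    print(core_meets_target(fall, cut_score=45))         # True
    print(students_below_benchmark(fall, cut_score=45))  # ['D', 'H']
```

Running the same check separately for each topic area the screener reports is one way to look for the trends and patterns described above.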

Question #3: Once I know which students need support, how do I match evidence-based intervention support to specifically meet their need(s)?

Intervention support should be as robust as possible to meet students’ specific areas of need. To avoid Googling endlessly to determine whether a favorite intervention is research-based, check it against the evidence standards in ESSA (the Every Student Succeeds Act). ESSA defines four tiers of evidence (strong, moderate, promising, and demonstrates a rationale), giving educators a common yardstick for selecting interventions that are grounded in research. The duration of an intervention should be based on student need and on the research-based recommendations for that intervention.

Question #4: What data should I use to determine if the interventions I provide are working?

Progress monitoring assessments should be administered weekly or biweekly, depending on the student's needs, during small-group or intervention time. Setting aside time to review my students’ progress monitoring data regularly helps me determine whether the interventions I am providing are having a positive impact. When I see growth in a student’s progress monitoring data, this is a good indication that I am on the right track and should build on this success. If a student’s progress is stagnant (or declining), I may need to pivot to a different intervention activity better matched to the student’s needs, or I may have focused on a single intervention activity for too long.
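One common way to quantify growth is to fit a trend line to a student's weekly scores and compare its slope with a goal rate of improvement. The Python sketch below does that with an ordinary least-squares slope. The goal rate (`min_growth`), the minimum number of data points (`min_points`), the function names, and the sample scores are hypothetical placeholders; actual decision rules should come from the progress monitoring tool's own guidance.

```python
def weekly_slope(scores):
    """Ordinary least-squares slope of score vs. week index (points gained per week)."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two progress monitoring scores")
    mean_x = (n - 1) / 2  # mean of week indices 0..n-1
    mean_y = sum(scores) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(scores))
    den = sum((x - mean_x) ** 2 for x in range(n))
    return num / den


def intervention_decision(scores, min_growth, min_points=6):
    """Hypothetical decision rule: build on the intervention when the trend
    meets the goal rate, otherwise flag it for review."""
    if len(scores) < min_points:
        return "collect more data"
    if weekly_slope(scores) >= min_growth:
        return "growing: build on this intervention"
    return "below goal (stagnant or declining): consider a better-matched intervention"


if __name__ == "__main__":
    growing = [20, 22, 23, 25, 27, 28]  # slope is about 1.63 points per week
    flat = [20, 19, 21, 20, 20, 19]     # slope is about -0.09 points per week
    print(intervention_decision(growing, min_growth=0.5))
    print(intervention_decision(flat, min_growth=0.5))
```

A slope is used here rather than comparing only the first and last scores because a single unusually high or low week then has less influence on the decision.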


Question #5: Our student support team meeting time feels redundant and overwhelming. How can we best use this time? Is there a model that works best? And who should be attending these meetings?

Many of my clients have expressed concern about the time they spend in long student support meetings, discussing one student at a time and feeling as though they are not making any real progress. These meetings can be streamlined to reduce redundancy and improve efficiency by consolidating them into the following three meeting types. Because student needs should be covered in one of these three types, any other extraneous meetings can be cancelled:

(Meeting Type 1) Grade/Content Community Meetings facilitated by grade level/content level teams can be held monthly to create intervention group plans, identify patterns of need within the grade and/or content area, and monitor student progress.

(Meeting Type 2) Student-Specific Support Team Meetings facilitated by assigned teachers can be held either weekly or biweekly (depending on students’ needs) and used to create and evaluate plans for individual students.

Finally, (Meeting Type 3) School Leadership Meetings facilitated by leadership can be held three times a year after each benchmark screening period. These meetings should be used to evaluate tier movement, growth, and equity of tiers across the school.

It is critical to bring students’ benchmark screening and progress monitoring data to each meeting type to look for patterns or trends and to evaluate areas of need.
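For the School Leadership Meetings, tier movement between screening windows can be summarized with a simple count. This Python sketch assumes each student has been assigned a tier (1, 2, or 3, where a higher number means more intensive support) after each benchmark window; the dictionaries, student names, and function names are hypothetical.

```python
from collections import Counter


def tier_movement(before, after):
    """Count (earlier_tier, later_tier) transitions for students present in both windows."""
    return Counter((before[s], after[s]) for s in before if s in after)


def summarize_movement(movement):
    """Collapse transitions into the directions a leadership team might review."""
    summary = {"less_intensive": 0, "more_intensive": 0, "no_change": 0}
    for (old, new), count in movement.items():
        if new < old:
            summary["less_intensive"] += count
        elif new > old:
            summary["more_intensive"] += count
        else:
            summary["no_change"] += count
    return summary


if __name__ == "__main__":
    fall = {"A": 1, "B": 2, "C": 3, "D": 2, "E": 1}
    winter = {"A": 1, "B": 1, "C": 2, "D": 2, "E": 2}
    print(summarize_movement(tier_movement(fall, winter)))
    # {'less_intensive': 2, 'more_intensive': 1, 'no_change': 2}
    print(Counter(winter.values()))  # how many students sit in each tier this window
```

Breaking the same counts down by student group is one way a team could examine the equity of tiers across the school.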

These five essential questions can be used as a springboard to promote an efficient, data-driven culture at school and eliminate some of the “too much of much” effect. Please respond with tips and/or questions that work for you in your school!



About the author

[Guest Author] Deanne Rotfeld Levy, M.A.T.

Deanne Rotfeld Levy is a consultant for Branching Minds regarding MTSS best practices. Deanne is also a University Supervisor at the National College of Education at National Louis University. Deanne previously served as Vice President of Customer Success for Discovery Education and was a Chicago Public Schools special education teacher and case manager. Deanne holds a Master of Arts in Teaching Special Education from National Louis University.
