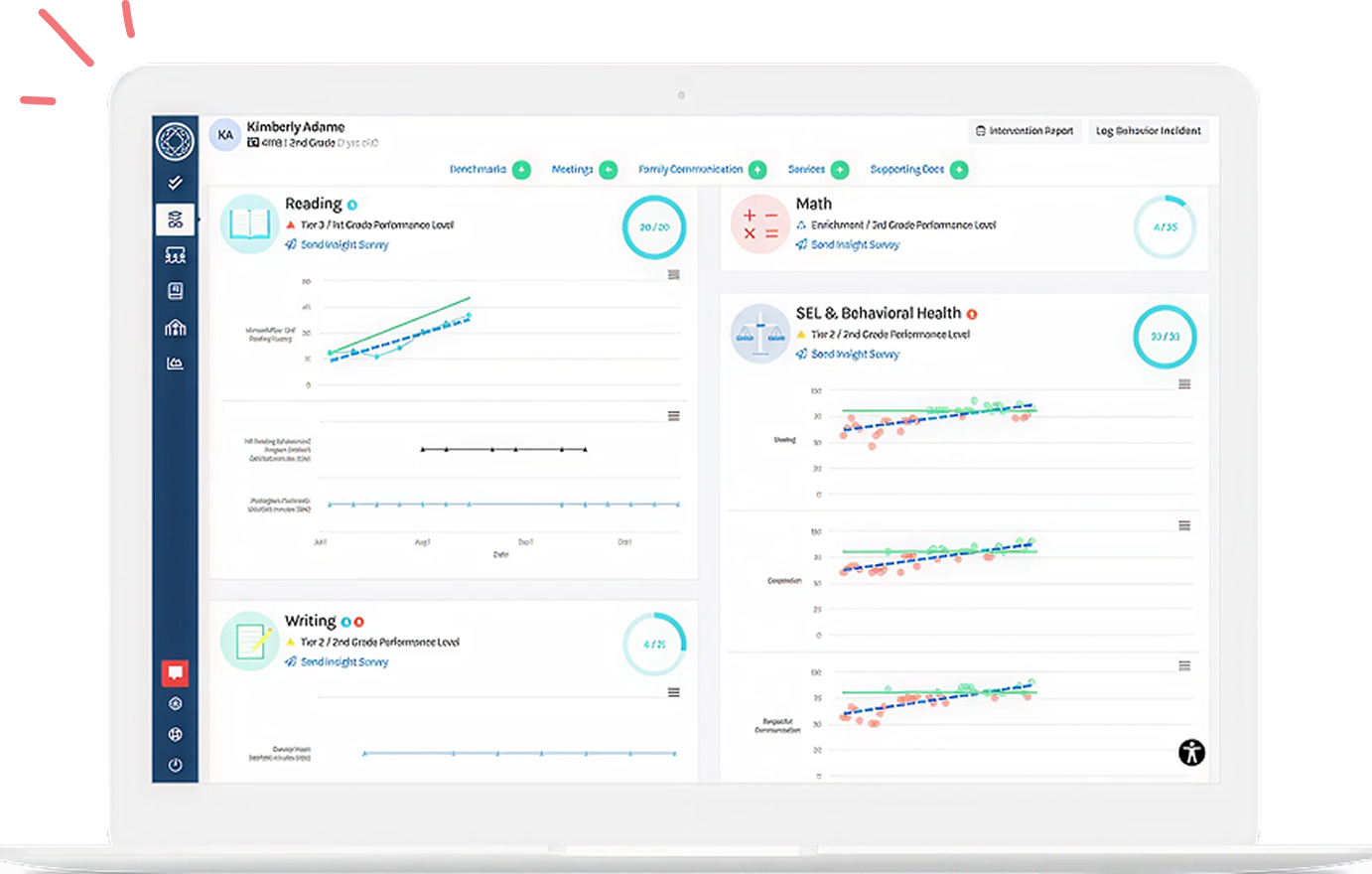

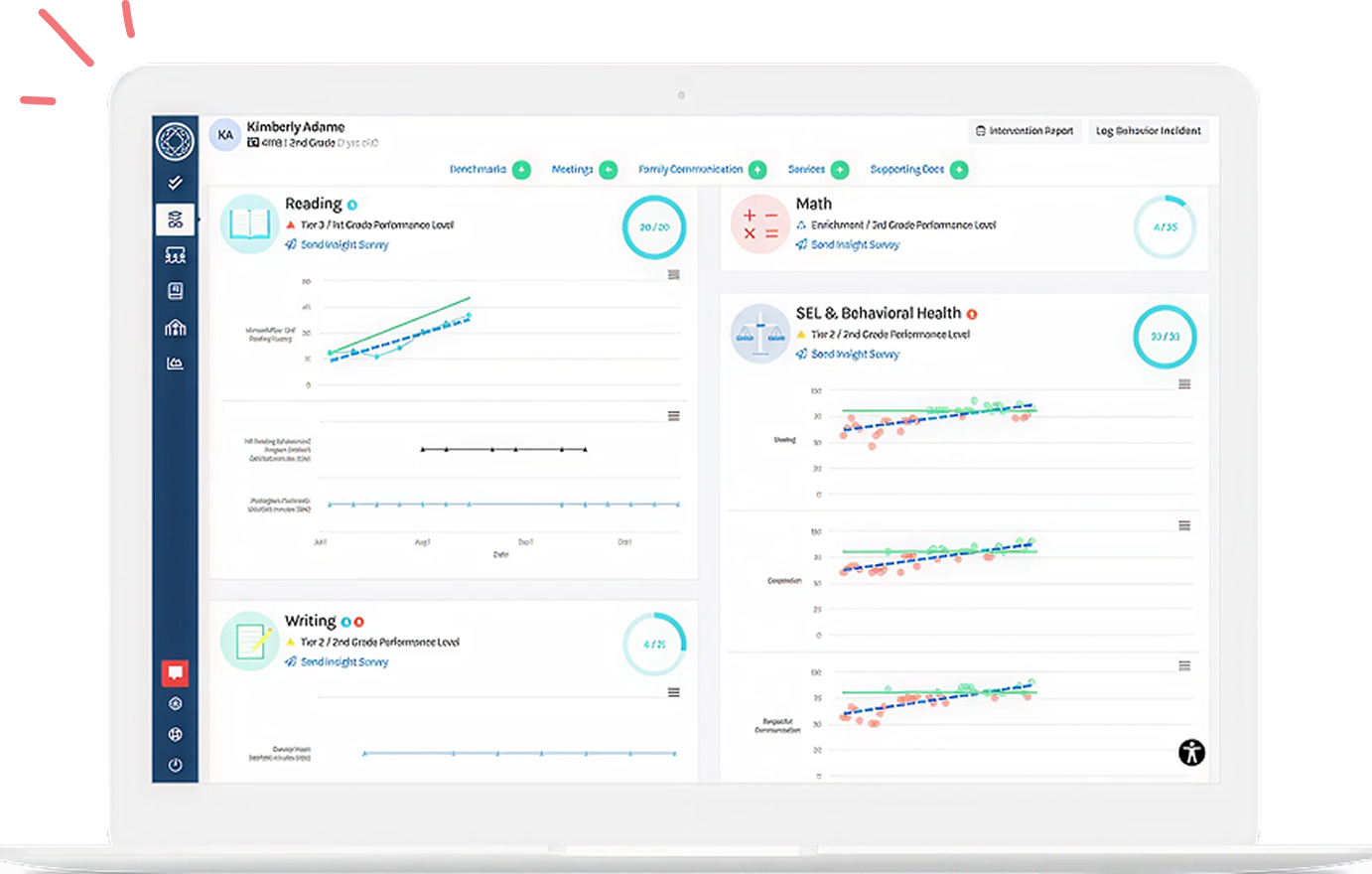

In a healthy RTI/MTSS practice, a data-driven approach is important not only for guiding decisions about individual student needs but also for evaluating the quality and impact of the practice at the school and district level. We recommend that school and/or district leadership meet three times a year, following each administration of universal screening assessments, to reflect on and evaluate their practice. The goal of this meeting is to understand the health of the school-level MTSS/RTI practice by looking at the percentage of students who are adequately served by core instruction, the level of instruction across demographics, and the improvement in student outcome measures since the last meeting. These metrics are used to evaluate the quality of practice across Tier 1, 2, and 3 levels of support and to guide school-level improvement plans.

![[Guest Author] Dr. Eva Dundas-avatar](https://www.branchingminds.com/hs-fs/hubfs/Team/Eva-2-1.jpeg?width=82&height=82&name=Eva-2-1.jpeg)

About the author

[Guest Author] Dr. Eva Dundas

Dr. Dundas has a Ph.D. in Developmental and Cognitive Psychology from Carnegie Mellon University, where she conducted research on how the brain develops when children acquire visual expertise for words and faces. Her research also explores how the relationship between neural systems (specifically language and visual processing) unfolds over development, and how those dynamics differ in neurodevelopmental disorders such as dyslexia and autism. She has published articles on that subject in the Journal of Cognitive Neuroscience, Neuropsychologia, Journal of Experimental Psychology: General, and Journal of Autism and Developmental Disorders. Dr. Dundas also has an M.Ed. in Mind, Brain, and Education from Harvard University and a B.S. in Neuroscience from the University of Pittsburgh.