Many educators are aware of the importance of promoting students’ social-emotional skills and how this can be done through well-coordinated and implemented social-emotional learning (SEL) programs and practices. But whether or not these approaches are being implemented effectively and the level of impact they are having on student outcomes can be a bit more difficult to determine.

Evaluating the Progress and Impact of SEL: Key Takeaways

- Measuring the progress and impact of SEL programs, interventions, and practices is a critical component of an effective multi-tiered system of supports.

- The data being collected to monitor and assess impact should be directly aligned with the programs being implemented.

- Collecting and reviewing these data on a regular and determined schedule will provide insight into the efficacy and effectiveness of your SEL initiatives across all levels of support.

It is often assumed that sophisticated data collection and analysis methods are required to figure out what is working and why. It’s true that high-quality data can help answer some of these questions, but there are many practical steps that educators and administrators can take toward having a better understanding of how their SEL initiatives are going.

Below we outline why exactly measuring these things is important and the steps that can be taken to ensure your school or district is effectively evaluating progress and impact. We also address commonly asked questions that come up when working with educators throughout this process, as well as critical indicators of progress and impact and how they can be monitored and measured.

Why Is Evaluating the Progress and Impact of SEL Important?

One of the core components of an effective multi-tiered system of supports is data-based decision-making. This includes using data to determine whether or not an intervention, support, or program is having its intended impact on student learning and development. Districts and schools invest a lot of time, energy, and resources into SEL. Teachers’ time is incredibly limited and valuable; they should be focusing on things that are making a difference. If their efforts are not having an impact, they should know sooner rather than later so they can make necessary changes.

Measuring progress and impact can also serve as a motivating factor for educators and teachers, especially those directly involved in implementing SEL. Being able to see how a program or practice is impacting the behaviors and skills of students can show educators that their efforts are worthwhile and can make a difference for their students.

Deciding on Data

When trying to evaluate the progress or impact of an SEL program or intervention, you need to first decide how exactly you will measure progress. This includes determining which instruments, tools, or measures you will be using. For example, will you rely on teacher observations or reports of student behavior and skills? Or will you use student self-reports? These tend to be the most common forms of student SEL assessment, but each has pros and cons (see this blog post for more details on measuring SEL).

The data you plan on collecting should also be aligned with the intervention/program being implemented. For example, if your school is using a program that teaches students problem-solving and relationship-building, you shouldn’t be measuring progress using an assessment focused on grit or growth mindset.

When examining the components of the program or intervention, try to identify what exactly is being taught and what the outcomes should look like, in terms of observable behaviors in students. Would you expect to see improvements in social skills, cooperation, communication, academic engagement, or other competencies? Ensure that the data you collect is directly linked to the competencies you hope to build and promote.

Finally, outline a timeframe for data collection and program implementation. Ideally, collect data before implementation begins, or as close to the start as possible. This will give you a reliable assessment of students’ baseline competencies. It can also help you determine which sub-skills or areas of SEL students need the most help with, so that the program or intervention can be modified to meet those specific needs.

If you are measuring progress on a shorter time frame for individuals or small groups of students (i.e., students receiving Tier 2 or 3 support), you will most likely need to collect data more frequently in order to detect changes in student behavior. If you are trying to get a sense of impact across a larger group of students (e.g., an entire classroom, grade level, or school), you can spread out your data collection, as significant changes probably won’t be noticeable until the program has been implemented for 2-3 months.

Common Questions Related to Data Collection

1. What if we do not have an SEL assessment or survey at our school?

If your school or district doesn’t have or use a social-emotional or behavioral survey, assessment, or screener, you can look for other sources of data that you might already be collecting. For example, behavior citations/incidents, attendance, and suspension rates could be used as alternative ways to measure impact. Again, you want to ensure that the program, practices, or interventions you are using address these types of issues, or skills associated with these types of behavioral outcomes.

Also, data doesn’t always have to be quantitative. Schools can collect qualitative data and feedback from teachers and students on their perspectives, what they learned, and what they liked and did not like about a program. These types of data provide important information on the feasibility and acceptability of an SEL program among students and staff. Data can be collected through open-ended surveys, questionnaires, and focus groups.

2. What if doing data collection more than once a year is not feasible?

We recommend collecting school-wide social-emotional and behavioral data at least twice during a school year, but sometimes this is not feasible, especially for schools just starting out with this type of work. It is also possible to compare progress and outcomes on a yearly basis. When using this approach, educators should adjust their goals and expectations: it might be more difficult to see large impacts when they are only being measured once per year. It is also important to consider other factors that might have occurred over the course of the year and affected students’ social-emotional and behavioral development. For example, changes in staff, events in the school or community, and changes in budgets can all impact students.

Another option is to do more frequent data collection with a subset of students. For example, a school might decide on screening the entire population for social-emotional and behavioral competencies once a year but do 1-2 additional screenings, or more frequent progress monitoring, with students who are at a higher risk and who might also be receiving more targeted interventions and supports. Ultimately, these types of decisions on who should be screened and how often will depend on the needs of each district and school.

Measuring Implementation

The progress and impact of an SEL program or intervention directly reflect its implementation. The most well-researched and high-quality SEL programs can fail to impact students because of poor implementation. This is why measuring the quality and fidelity of implementation is essential. There is a range of ways to measure implementation.

Some SEL programs provide fidelity checklists that teachers can complete on a daily or weekly basis. Even if they are not provided, it is ideal to have teachers or other staff members who are implementing a program keep track of their implementation and document any issues that arise. Make clear to educators that this tracking is not only for accountability purposes but also a way to gain insight into how the program is going, whether students seem to be engaged and responding to it, and what challenges staff are facing. This information can help identify whether any changes or adjustments are needed.

Sometimes a program can be difficult to implement, and teachers require additional training or resources. This is all important information to document and collect and will provide insight into the progress and impact that the intervention will have on students.

Evaluating Progress and Impact

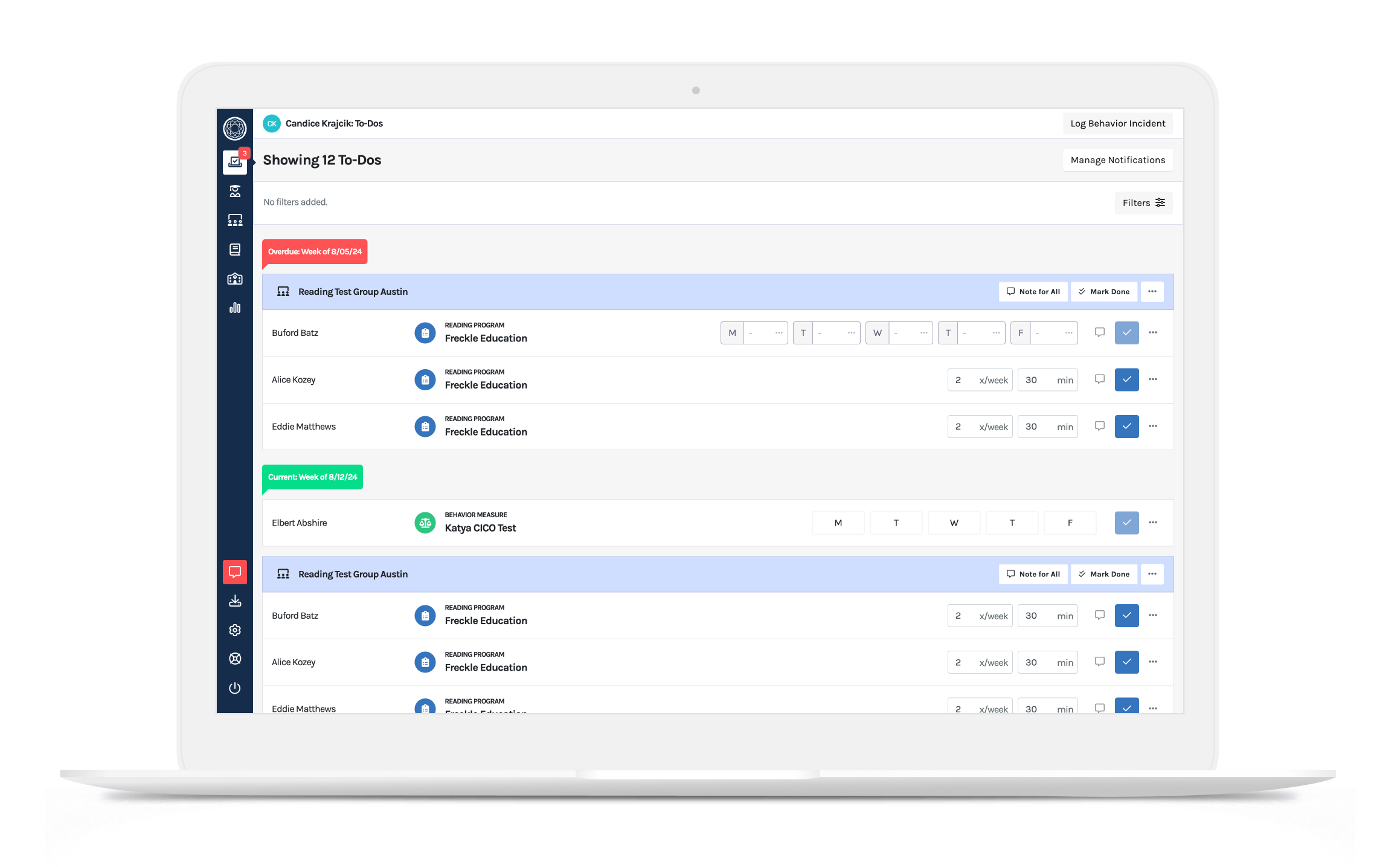

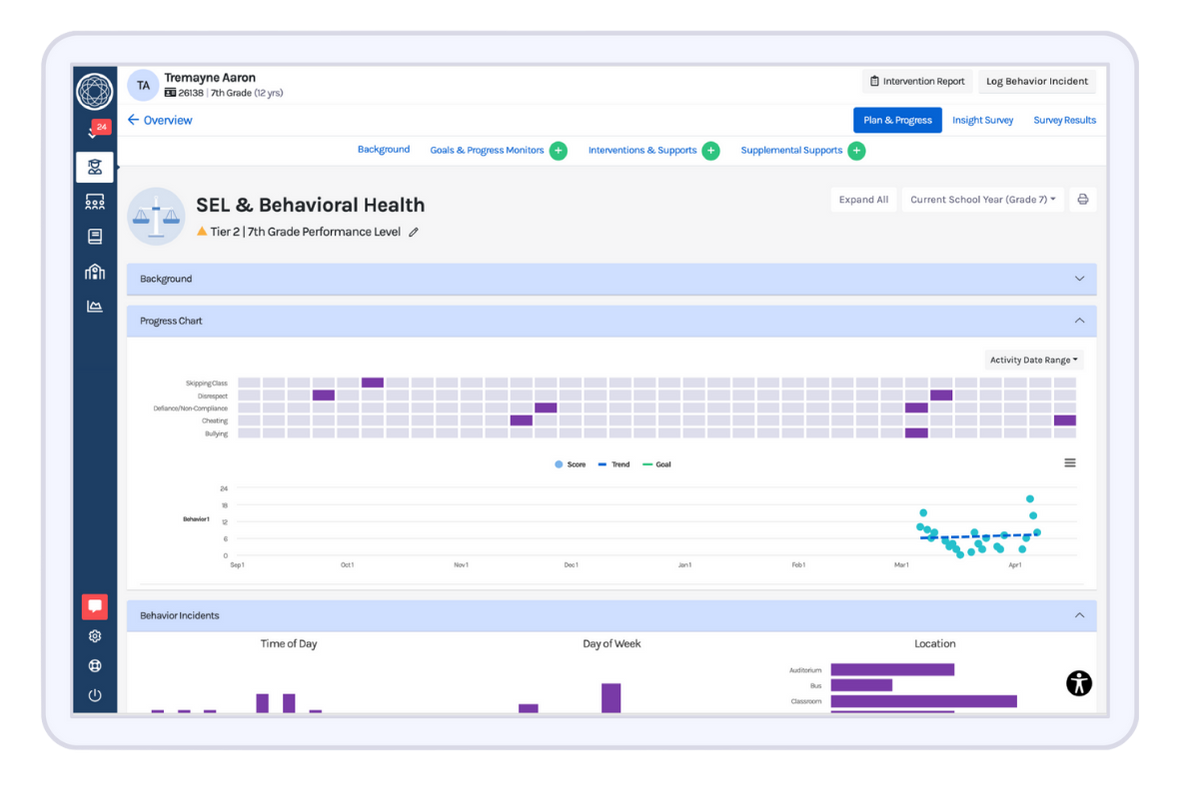

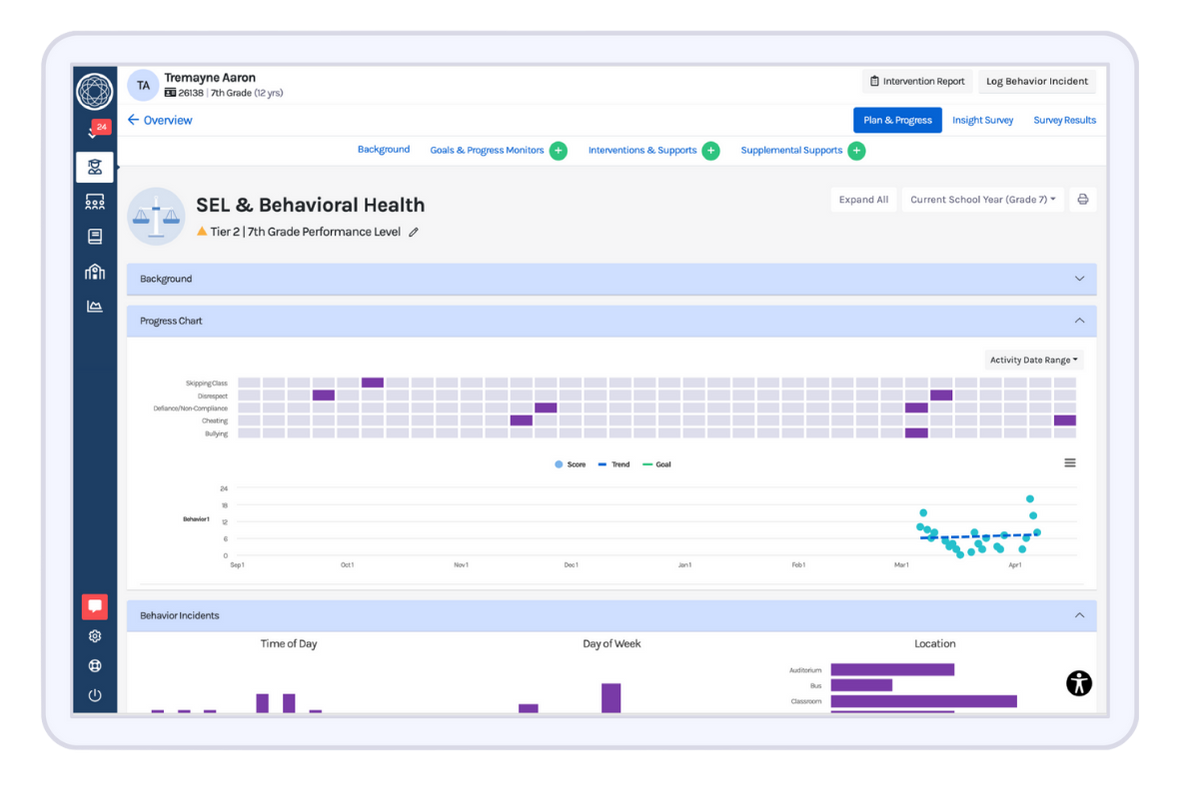

For individual students receiving targeted interventions addressing specific behaviors or skills, tracking daily behaviors can help indicate whether or not progress is being made. In these types of situations, usually involving Tier 2 or 3 levels of support, you want to be collecting data frequently—if students are not improving, you want to know sooner rather than later. Examples of this include a daily behavior tracker or a check-in/check-out sheet where students receive a score or number of points based on the behaviors displayed throughout the day or during a class period.

As mentioned above, the behaviors being monitored should be directly aligned with the intervention and support that is being provided to the student. It is also ideal to use a standardized and consistent scale across all staff who will be completing the ratings as well as an identified SMART goal. These components will make it much easier to review progress and make decisions regarding the next steps for the student.
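
As one way to picture the review step described above, here is a minimal sketch of scoring a check-in/check-out sheet against a goal. The 0-2 point scale, the 80% goal, and the sample scores are all assumptions made up for illustration; they are not taken from any particular program or tool.

```python
from statistics import mean

# Assumed for illustration: each class period is rated on a 0-2 scale,
# and the student's goal is to earn at least 80% of the possible points.
MAX_POINTS_PER_PERIOD = 2
GOAL_PERCENT = 80.0

# Hypothetical ratings for one student across one week (four periods per day).
daily_points = {
    "Mon": [2, 1, 2, 1],
    "Tue": [2, 2, 1, 2],
    "Wed": [1, 1, 2, 1],
    "Thu": [2, 2, 2, 2],
    "Fri": [2, 2, 2, 1],
}


def daily_percent(points):
    """Return the percent of possible points earned in one day."""
    return 100.0 * sum(points) / (MAX_POINTS_PER_PERIOD * len(points))


# Percent of points earned each day, the days the goal was met,
# and the average across the week.
percents = {day: daily_percent(pts) for day, pts in daily_points.items()}
days_met = [day for day, pct in percents.items() if pct >= GOAL_PERCENT]
weekly_average = mean(percents.values())

for day, pct in percents.items():
    status = "met" if pct >= GOAL_PERCENT else "not met"
    print(f"{day}: {pct:.1f}% ({status})")
print(f"Weekly average: {weekly_average:.1f}%")
```

Keeping the scale and the goal as named constants reflects the point about consistency: every staff member rates on the same scale, and progress is always judged against the same stated goal.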

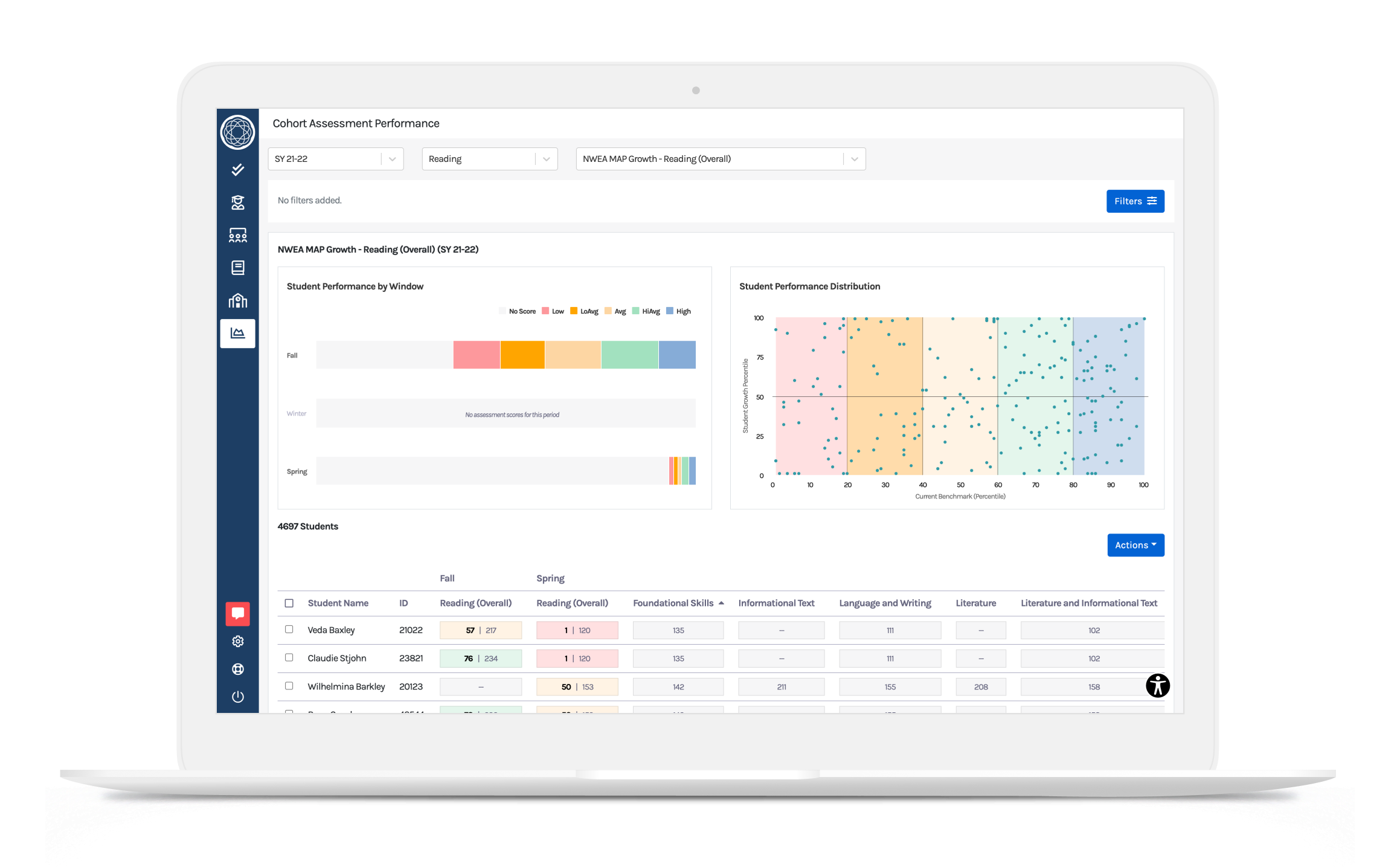

For larger groups of students (e.g., classrooms, grade levels, and campuses), data can be reviewed less frequently, ideally 2-3 times a year. There are different ways to measure overall progress and impact, and this will also depend on the data you collect.

When using an assessment or screener that has levels associated with scores, a good approach is to examine movement across those categories or levels. For example, look at how many students moved from the high-risk category to the moderate- or low-risk one, as well as how many students moved from the moderate- or low-risk category to the high-risk one.
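
A small sketch of counting movement between risk categories is shown here. The student IDs, the three category names, and the fall/spring screening windows are hypothetical; a real screener may use different levels and labels.

```python
from collections import Counter

# Hypothetical risk levels for the same students at two screening windows.
fall = {"s1": "high", "s2": "moderate", "s3": "low",
        "s4": "high", "s5": "low", "s6": "moderate"}
spring = {"s1": "moderate", "s2": "low", "s3": "low",
          "s4": "high", "s5": "moderate", "s6": "high"}

# Assumed ordering of categories, from lowest to highest risk.
RISK_ORDER = {"low": 0, "moderate": 1, "high": 2}

# Only compare students who were screened at both windows.
matched = [s for s in fall if s in spring]

# Count every (fall level, spring level) pair.
transitions = Counter((fall[s], spring[s]) for s in matched)


def direction(before, after):
    """Label a change in risk level as improved, worsened, or unchanged."""
    diff = RISK_ORDER[after] - RISK_ORDER[before]
    if diff < 0:
        return "improved"
    if diff > 0:
        return "worsened"
    return "unchanged"


summary = Counter(direction(fall[s], spring[s]) for s in matched)

for (before, after), count in sorted(transitions.items()):
    print(f"{before} -> {after}: {count}")
print(dict(summary))
```

Counting movement in both directions matters: a drop in the number of high-risk students can hide other students who moved into that category over the same period.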

If you have someone on your data team who is knowledgeable in inferential statistics, you can conduct a paired-samples t-test to determine whether or not the overall change in student scores is statistically significant. Results from qualitative data (e.g., open-ended surveys, focus groups, feedback) should also be reviewed and organized into common themes, a process known as content analysis.
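
For readers curious about what a paired-samples t-test computes, the sketch below works out the t statistic and degrees of freedom using only the Python standard library. The pre and post scores are invented for illustration. Turning the statistic into a p-value requires a t-distribution table or a statistics package (for example, SciPy's `scipy.stats.ttest_rel`), which this sketch deliberately leaves out.

```python
import math
from statistics import mean, stdev

# Hypothetical screener scores for the same eight students, before and after.
pre = [12, 15, 11, 14, 10, 13, 16, 12]
post = [14, 15, 13, 17, 11, 15, 18, 13]


def paired_t(before, after):
    """Return (t statistic, degrees of freedom) for paired scores."""
    if len(before) != len(after) or len(before) < 2:
        raise ValueError("need at least two matched pairs of scores")
    # The test is run on each student's own change, not on the group means.
    diffs = [a - b for b, a in zip(before, after)]
    sd = stdev(diffs)  # sample standard deviation of the changes
    if sd == 0:
        raise ValueError("all changes are identical; t is undefined")
    n = len(diffs)
    t_stat = mean(diffs) / (sd / math.sqrt(n))
    return t_stat, n - 1


t_stat, df = paired_t(pre, post)
print(f"t = {t_stat:.2f}, df = {df}")
```

The test is "paired" because each student's post score is compared with that same student's pre score, so only students with data at both time points can be included.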

Another essential step is to review data and outcomes by subgroups within your larger population. In addition to looking at impact by grade level and classroom, data should be examined by demographic factors, such as gender, race, ethnicity, IEP status, special education status, ELL status, and free/reduced-price lunch eligibility (an indicator of socioeconomic status).

It is quite common for SEL programs to have differential impacts on students depending on their level of initial need, but if there are groups of students who are not responding to an intervention, it could mean that the program or its implementation is not appropriate for that given group. Making linguistic or cultural adaptations to a program may be necessary.
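
The subgroup review described above can be sketched as a simple grouped average of score changes. The records, field names (`grade`, `ell`, `pre`, `post`), and scores below are hypothetical and stand in for whatever your own data export contains.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical student records with a pre and post screener score.
records = [
    {"grade": 3, "ell": True, "pre": 10, "post": 11},
    {"grade": 3, "ell": False, "pre": 11, "post": 14},
    {"grade": 3, "ell": True, "pre": 12, "post": 12},
    {"grade": 4, "ell": False, "pre": 13, "post": 15},
    {"grade": 4, "ell": True, "pre": 9, "post": 11},
    {"grade": 4, "ell": False, "pre": 12, "post": 16},
]


def mean_change_by(rows, key):
    """Average (post - pre) change for each value of the given field."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[key]].append(row["post"] - row["pre"])
    return {group: mean(changes) for group, changes in groups.items()}


by_ell = mean_change_by(records, "ell")
by_grade = mean_change_by(records, "grade")

print("Mean change by ELL status:", by_ell)
print("Mean change by grade:", by_grade)
```

With very small subgroups, averages like these can swing widely on one or two students, so treat a gap between groups as a prompt to look closer at the program and its implementation rather than as a firm conclusion.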

Adjust Expectations Around Growth & Student Outcomes

Finally, when reviewing data, remember that even SEL programs with the strongest evidence base usually have a small to moderate impact on student outcomes. Therefore, it is important to adjust expectations. Even a small amount of growth or progress is great and can be a sign that things are moving in the right direction. It can also take some time for the skills that are being taught in SEL programs, interventions, and practices to become observable changes in student behavior.

On the other hand, if you are not seeing any growth or changes, take a step back and carefully review the program or intervention and its implementation. Be sure to also review the measures and data you are using to rule out any potential issues with reliability and validity. Effective data-based decision-making means using data to figure out where the issues lie and what kinds of adjustments or changes need to be made in order to drive student growth, learning, and development of critical skills and competencies.

Evaluating Your SEL Initiatives

Develop a plan for how your district or school will measure the progress and impact of your SEL programs, interventions, and practices this school year. If a plan has already been created (and is underway), review the assessments, tools, and other forms of data you will be collecting as well as the methods you plan on using to assess implementation, progress, and overall outcomes. Finally, ensure that all necessary stakeholders are involved in the decision-making process around which data will be collected and how it will be shared and reviewed.

About the author

Dr. Essie Sutton

Essie Sutton is an Applied Developmental Psychologist and the Director of Learning Science at Branching Minds. Her work brings together the fields of Child Development and Educational Psychology to improve learning and development for all students. Dr. Sutton is responsible for studying the impacts of Branching Minds on students’ academic, behavioral, and social-emotional outcomes. She also leverages MTSS research and best practices to develop and improve the Branching Minds platform.
