So, you have identified students needing a support plan, created goals, selected and implemented appropriate interventions, and collected data using a progress monitoring tool or assessment. Fantastic! These are all necessary steps in supporting students through your Multi-Tiered System of Supports (MTSS).

But, NOW WHAT? How do you know if the intervention is actually “working”?

In my career as an instructional coach helping teachers implement intervention plans with struggling students, it all came down to the data. The starting point was setting SMART goals: Specific, Measurable, Attainable, Relevant, and Time-bound. These goals helped us determine which data to consider and what the criteria for success would be.

MTSS Intervention Plan: Key Takeaways

- It’s all about the data, particularly the Rate of Improvement (ROI) data. Decision-making needs to be objective and data-driven.

- Keep your eyes on the intervention. Ensure the intervention is being implemented with fidelity.

- Consider the SMART goal, progress monitor, and the intervention itself when making adjustments to a plan.

Guiding Questions

Part of this work is about determining the “just right” level of intervention, and like Goldilocks, there is a time to make adjustments. Just as Goldilocks collected data along the way to find what worked for her, with an intervention plan, there will be adjustments as data is collected.

The right set of questions can help you and your MTSS support team make informed decisions about student progress and next steps. This collaborative practice also helps determine effective instructional practices and interventions for the future.

1. How Many Data Points Do You Need?

It is a best practice to gather at least three progress monitoring data points before the plan is reviewed for effectiveness. Why three? Because after three data points are gathered, it is possible to calculate a trend line that shows the Rate of Improvement (ROI), which allows us to see how the student is growing in the specific skill. If growth is uncertain based on the three data points, wait for more data points before making any decisions.
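To make the trend line idea concrete, here is a minimal sketch of one common way to compute an ROI: the slope of a least-squares line through the progress monitoring scores. The least-squares method, the function name `rate_of_improvement`, and the sample scores are assumptions for illustration; your progress monitoring tool may calculate ROI differently.

```python
from typing import Sequence


def rate_of_improvement(weeks: Sequence[float], scores: Sequence[float]) -> float:
    """Return the least-squares slope of scores over weeks (score units per week).

    Requires at least three paired data points, following the best practice
    described above. This is an illustrative sketch, not a tool's official formula.
    """
    if len(weeks) != len(scores) or len(weeks) < 3:
        raise ValueError("need at least three paired data points")
    n = len(weeks)
    mean_week = sum(weeks) / n
    mean_score = sum(scores) / n
    # Covariance of week and score, divided by the variance of week
    numerator = sum((w - mean_week) * (s - mean_score) for w, s in zip(weeks, scores))
    denominator = sum((w - mean_week) ** 2 for w in weeks)
    if denominator == 0:
        raise ValueError("data points must be collected at different times")
    return numerator / denominator


# Hypothetical scores collected in weeks 1, 2, and 3
print(rate_of_improvement([1, 2, 3], [20, 24, 25]))  # 2.5
```

In this made-up example the student gains about 2.5 score units per week. With only three points, a single unusual testing day can swing the slope noticeably, which is one reason to wait for more data when growth looks uncertain.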

Keep in mind that individual data points may land above or below the goal line for reasons unrelated to the intervention, including the testing environment, the time of day the student is monitored, fatigue, or illness. For example, a student might perform differently in a classroom versus a quiet library, or before lunch versus after lunch. For this reason, more data is better! At the same time, you don’t want to wait too long if the intervention isn’t providing what the student needs.

Strike a “just right” balance of data collection — not too much and not too little — and be sure that your progress monitoring tools are standards-aligned and based on the skill and goal area in need of improvement.

2. What Is the Student’s Rate of Improvement (ROI)?

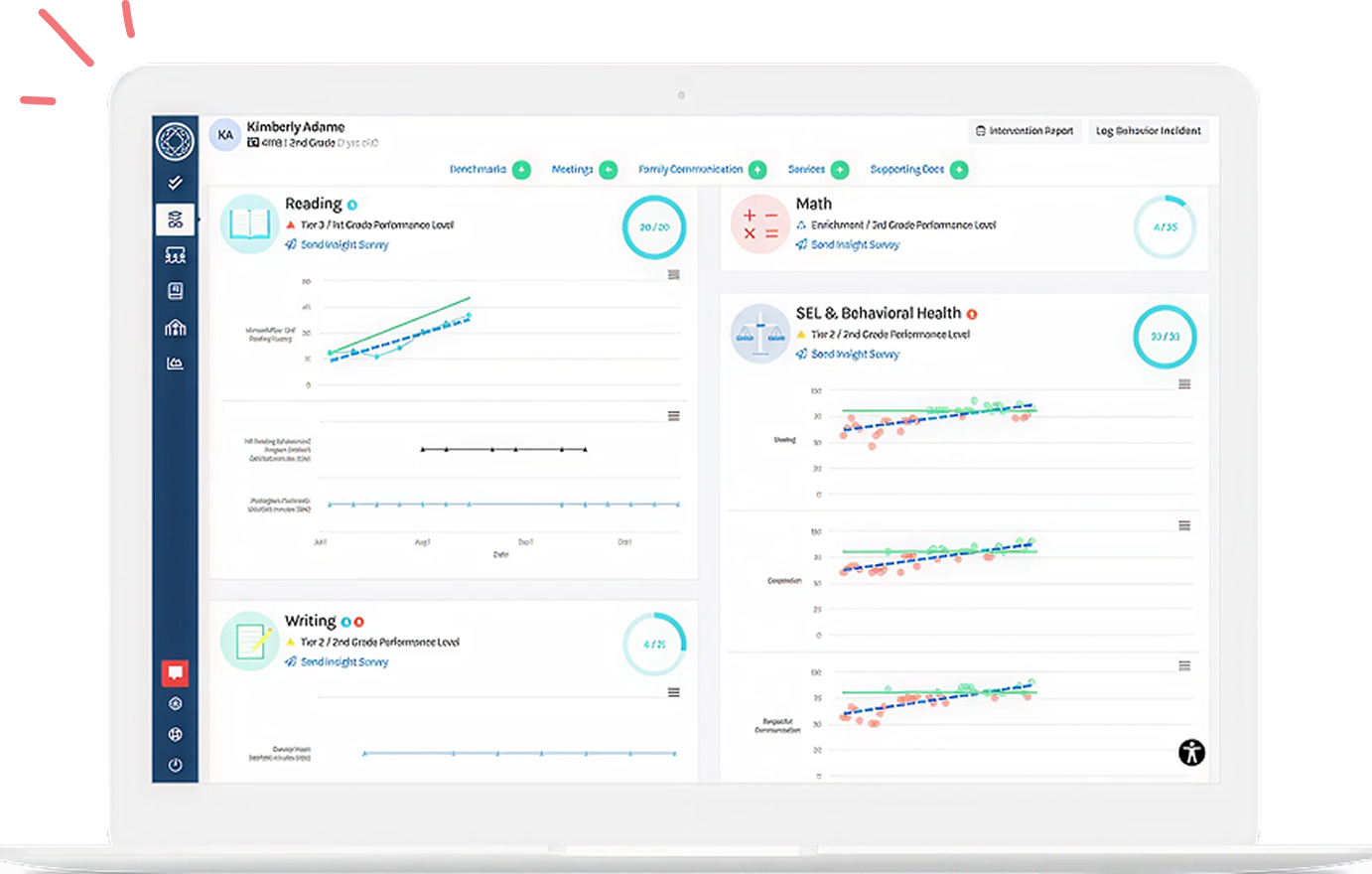

As you look at the progress monitoring data, what is it telling you? Calculate the Rate of Improvement (ROI) to visually analyze the progress a student is making toward their identified SMART goal. An MTSS software platform such as Branching Minds makes it easy to see this trend line, along with the goal line, to identify whether a student is responding to intervention. If the student’s ROI is at or above the goal line, the student is on track to meet the goal.

- If it is difficult to see a pattern and the ROI is uncertain, take a close look at intervention fidelity and ensure that you have collected enough data points.

- If the rate of improvement is below the goal line, check the intervention fidelity. If fidelity is adequate, the intervention is not working for the student, and the plan should be adjusted or changed.
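One way to read "at or above the goal line" is to compare the student's actual weekly ROI with the weekly growth the goal requires. The sketch below assumes a straight goal line drawn from the baseline score to the SMART goal score. The function names and numbers are hypothetical, and this is not a description of how Branching Minds or any other platform draws its lines.

```python
def required_weekly_growth(baseline: float, goal: float, weeks: int) -> float:
    """Slope of a straight goal line from the baseline score to the goal score."""
    if weeks <= 0:
        raise ValueError("weeks must be positive")
    return (goal - baseline) / weeks


def is_on_track(actual_roi: float, baseline: float, goal: float, weeks: int) -> bool:
    """True when the student's ROI meets or exceeds the growth the goal requires."""
    return actual_roi >= required_weekly_growth(baseline, goal, weeks)


# Hypothetical plan: move from a score of 20 to 40 over a 10-week cycle
print(required_weekly_growth(20, 40, 10))  # 2.0
print(is_on_track(2.5, 20, 40, 10))        # True
print(is_on_track(1.0, 20, 40, 10))        # False
```

A result of `False` is a prompt to check intervention fidelity first, as described above, rather than an automatic verdict on the intervention.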

Guidance When Making Decisions about Student Progress with Tier 2 or Tier 3 Plans

Tier 2 Decision Rules

| Performance Level | Growth/Rate of Improvement | Decision |
|---|---|---|
| 3 consecutive PM data points at or above 25th percentile goal line | Sufficient Growth | Move to Tier 1: Discontinue or fade out Tier 2 targeted small-group instruction |
| PM data consistently between 10-25th percentile | Sufficient Growth | Stay in Tier 2: Maintain the current Tier 2 targeted small-group instruction for another cycle |
| PM data consistently between 10-25th percentile | Uncertain Growth | Stay in Tier 2: Revise the current Tier 2 targeted small-group instruction and implement for another cycle |
| 4 consecutive PM data points between 0-9th percentile | Uncertain or Insufficient Growth | Move to Tier 3: Increase intervention intensity to reflect Tier 3 level of support and implement for another intervention cycle |

Tier 3 Decision Rules

| Performance Level | Growth/Rate of Improvement | Decision |
|---|---|---|
| 3 consecutive PM data points at or above 10th percentile | Sufficient Growth | Move to Tier 2: Revise plan to reflect Tier 2 targeted small-group instruction, and implement for another cycle |
| PM data consistently below 10th percentile | Sufficient Growth | Stay in Tier 3: Maintain the current Tier 3 intervention for another cycle |
| PM data consistently below 10th percentile | Uncertain Growth | Stay in Tier 3: Revise the current Tier 3 intervention and implement for another intervention cycle |
| PM data consistently below 10th percentile | Insufficient Growth | Consider Special Ed Referral: Review criteria and schedule referral meeting with team and parents |

*The “percentile” represents the comparison of the student’s growth to what is average for the grade level.
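For teams that like to see rules written out step by step, the two decision tables can be sketched as a pair of lookup functions. Several choices here are assumptions rather than part of the rules themselves: "consistently" is read as the three most recent data points, a score exactly at the 25th percentile counts as "at or above" the goal line, and the function and label names are hypothetical. Any combination the tables do not cover returns `None`, meaning the team needs to talk it through.

```python
from typing import Optional, Sequence

# Growth labels reflect the team's judgment of the ROI trend (hypothetical names).
SUFFICIENT, UNCERTAIN, INSUFFICIENT = "sufficient", "uncertain", "insufficient"


def _recent_all_in(percentiles: Sequence[float], n: int, low: float, high: float) -> bool:
    """True if at least n points exist and the n most recent satisfy low <= p < high."""
    recent = list(percentiles)[-n:]
    return len(recent) == n and all(low <= p < high for p in recent)


def tier2_decision(percentiles: Sequence[float], growth: str) -> Optional[str]:
    """Map Tier 2 PM percentiles (oldest first) and a growth label to a table row."""
    if _recent_all_in(percentiles, 3, 25, 101) and growth == SUFFICIENT:
        return "Move to Tier 1"
    if _recent_all_in(percentiles, 4, 0, 10) and growth in (UNCERTAIN, INSUFFICIENT):
        return "Move to Tier 3"
    # Assumption: "consistently" means the three most recent data points.
    if _recent_all_in(percentiles, 3, 10, 25):
        if growth == SUFFICIENT:
            return "Stay in Tier 2: maintain"
        if growth == UNCERTAIN:
            return "Stay in Tier 2: revise"
    return None  # not covered by the table; the team decides


def tier3_decision(percentiles: Sequence[float], growth: str) -> Optional[str]:
    """Map Tier 3 PM percentiles (oldest first) and a growth label to a table row."""
    if _recent_all_in(percentiles, 3, 10, 101) and growth == SUFFICIENT:
        return "Move to Tier 2"
    if _recent_all_in(percentiles, 3, 0, 10):
        return {
            SUFFICIENT: "Stay in Tier 3: maintain",
            UNCERTAIN: "Stay in Tier 3: revise",
            INSUFFICIENT: "Consider Special Ed referral",
        }.get(growth)
    return None  # not covered by the table; the team decides


print(tier2_decision([12, 18, 20], UNCERTAIN))   # Stay in Tier 2: revise
print(tier3_decision([4, 6, 8], INSUFFICIENT))   # Consider Special Ed referral
```

The `None` result is deliberate: mixed data, such as scores that bounce between bands, does not match any row, and that is exactly the situation where the problem-solving team's judgment matters most.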

Related Resource: How To Use Progress Monitoring Data To Guide Decision-Making in an MTSS Practice

3. What Adjustments Need To Be Made?

Before adjusting plans, each component of the plan should be analyzed. Below are some considerations given each piece of the plan:

- SMART Goal: When reviewing plans, you can ask, does the goal align with the needs of the student? Is the student aware of what the goal is and how to reach it? Make this explicit! Your goal should also be aligned with the norming chart based on the progress monitoring assessment used. Remember, this is where our support begins when building support plans. Understanding the student's needs and identifying a specific skill needing improvement is a crucial first step.

- Progress Monitoring: Does the progress monitoring tool or assessment align with the goal? As mentioned previously, progress monitoring needs to be consistent and standards-aligned. When building our math support plans for a group of sixth graders, we used weekly short quizzes aligned to the SMART goal. If students were showing progress on the quizzes, we knew we could continue the intervention. If they weren’t successful, we would discuss why the student might be struggling as a team. When analyzing student data in MTSS meetings, we reviewed multiple pieces of data to determine growth. For example, I recently worked with a school to review a student’s progress monitoring data as well as their district benchmark data, NWEA Measure of Academic Progress (MAP) scores, and qualitative data from the classroom teacher to determine the student’s progress.

- Intervention Fit: One size does not fit all when selecting an intervention. Ensure that the intervention is research-based and targeted to the specific skill deficit of the student, and is culturally relevant. Also, consider whether there is an underlying issue such as a more foundational skill that the student has not yet acquired. One Branching Minds partner school saw huge gains in a student’s iReady growth. What was the change? The student was receiving intervention in their first language (Spanish) and was responding very positively based on the progress monitoring data.

- Intervention Fidelity: The problem-solving team should consistently track the effectiveness of interventions. Documentation of the implementation dates, number of minutes, and any other notes may be kept in logs and reviewed to help analyze the integrity of an intervention plan. In addition, MTSS support personnel should periodically observe the intervention in action to identify any improvements needed.

In my work as an instructional specialist, our team allowed at least six weeks of implementation before deciding whether the plan worked for the student. Throughout that six-week period, I found it very helpful to directly observe students with our interventionists and offer feedback on their intervention implementation. Observing the intervention in progress allowed me to see what was working and what small adjustments might be needed.

- Are additional support and accountability required, such as professional development or coaching?

- Is the plan feasible, or should it be adjusted to reflect available resources and the student's needs?

- Does the cadence or length of the intervention need to be adjusted?
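The intervention logs described above (implementation dates, number of minutes, and notes) can be kept in a very simple structure. The sketch below is one hypothetical way to do it. The `minutes_planned` field and the "percent of planned minutes delivered" measure are assumptions added for illustration, not a standard fidelity metric.

```python
from dataclasses import dataclass
from datetime import date
from typing import Sequence


@dataclass
class SessionLog:
    """One intervention session, mirroring the log fields mentioned above."""
    session_date: date
    minutes_planned: int    # assumption: the plan specifies minutes per session
    minutes_delivered: int
    notes: str = ""


def percent_of_planned_minutes(logs: Sequence[SessionLog]) -> float:
    """Share of planned intervention minutes that were actually delivered."""
    planned = sum(log.minutes_planned for log in logs)
    if planned == 0:
        raise ValueError("no planned minutes recorded")
    delivered = sum(log.minutes_delivered for log in logs)
    return 100 * delivered / planned


# Hypothetical week of three 30-minute sessions
logs = [
    SessionLog(date(2024, 1, 8), 30, 30),
    SessionLog(date(2024, 1, 10), 30, 20, notes="fire drill"),
    SessionLog(date(2024, 1, 12), 30, 25),
]
print(round(percent_of_planned_minutes(logs), 1))  # 83.3
```

A number like this only covers dosage. It does not replace direct observation, which is still needed to see whether the intervention is being delivered as designed.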

Making Data-Driven Decisions in MTSS

Regardless of the type of intervention or progress monitoring tools used, an objective look at the data should be the guiding factor in making decisions about the effectiveness of an intervention plan. A problem-solving team that meets consistently to analyze data can understand what is working in an intervention plan and what needs to be adjusted.

Be a “Goldilocks” with your adjustments. Intervention plans are about finding the “just right” support to help the student make progress, and the Rate of Improvement provides an objective guideline for these adjustments.

The data gathered through the MTSS process provides the best means of evaluating success, but gathering and analyzing this data can be confusing and time-consuming. Branching Minds helps educators get clarity and save time by doing the data tracking and analysis for you.


About the author

[Guest Author] Lisa Fik

Lisa Fik is a Consultant with Branching Minds with 13+ years as an educator at the middle school level. With experience in large public schools, a small public charter school, and an international school in Shanghai, China, Lisa has supported an array of diverse learners. As an instructional coach, she has supported teachers in math for grades 5-8, Algebra 1, and Geometry. As a classroom teacher, she has taught grades 6-8 and Algebra 1. As an instructional coach, she built an MTSS program from the ground up supporting sixth graders in math. In addition, Lisa has supported site, district, and network professional development to support the mission and vision of network goals. Lisa holds a Bachelor of Science from Illinois State University in middle level education (specializing in math and English), a Master of Science from National University in Applied School Leadership, and a preliminary administrative credential.

